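OpenClaw is very popular right now, so I wanted to deploy it on my home NAS.

Because OpenClaw has access to the local system, I would never run it directly on the NAS's own OS, so it needs an isolated environment. Here are my notes from tinkering with it.

Docker deployment

My first instinct was a containerized Docker deployment; after all, most of the services on my NAS already run in containers.

According to the GitHub repository, this is supported: you just run docker-setup.sh. After reading the relevant documentation and the script itself, I found that these environment variables let you customize the config and workspace directories: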

OPENCLAW_WORKSPACE_DIR=/path/to/openclaw/workspace

OPENCLAW_CONFIG_DIR=/path/to/openclaw/config_dir

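They point, respectively, to the workspace (which holds Markdown files such as README, MEMORY and SKILL) and to OpenClaw's own configuration (mainly openclaw.json).

You can also add a proxy for both the image build and the container runtime by adding this to the Dockerfile: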

ENV https_proxy=http://<proxy>:<port>

Then just run:

./docker-setup.sh

This builds the container image, starts the containers and runs onboard automatically, all in one go.
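The steps above can be collected into a small wrapper. This is only a sketch: OPENCLAW_ROOT and prepare_openclaw_dirs are hypothetical names that do not come from the OpenClaw repository, and it assumes docker-setup.sh reads the two variables from the environment as described above.

```shell
# Hypothetical base directory on the NAS; not part of OpenClaw itself.
OPENCLAW_ROOT="${OPENCLAW_ROOT:-/path/to/openclaw}"

# Export the two variables that docker-setup.sh is described as honoring
# and make sure both host directories exist before they are mounted.
prepare_openclaw_dirs() {
  export OPENCLAW_WORKSPACE_DIR="$OPENCLAW_ROOT/workspace"
  export OPENCLAW_CONFIG_DIR="$OPENCLAW_ROOT/config_dir"
  mkdir -p "$OPENCLAW_WORKSPACE_DIR" "$OPENCLAW_CONFIG_DIR"
}
```

Calling prepare_openclaw_dirs && ./docker-setup.sh from the repository checkout should then be equivalent to the manual steps.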

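It all looked great, but...

Pitfalls of the Docker deployment

Pitfall 1: the CLI container cannot connect to the gateway

OpenClaw's docker-compose.yaml defines two containers by default: openclaw-gateway and openclaw-cli. The gateway container runs the long-lived service; the other one is for the CLI tool.

However, even though the gateway container is running fine and its port is mapped, using the CLI container immediately fails with this error: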

Error: gateway closed (1006 abnormal closure (no close frame)): no close reason

Source: local loopback

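This is clearly a networking problem. The gateway binds its service to loopback inside its own container, so when the other container tries to reach the gateway at 127.0.0.1:18789 it hits its own loopback interface, where nothing is listening.

The fix is to add network_mode: "service:openclaw-gateway" to the cli service in docker-compose.yml so that it shares the gateway's network. The CLI can then reach the gateway, and the health check runs "normally":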

docker compose run --rm openclaw-cli health

I have submitted this change as a PR: https://github.com/openclaw/openclaw/pull/13941
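If you would rather not edit the upstream file, the same setting can live in a Compose override file instead. This is a variant of the fix, not what the PR does. A minimal sketch, assuming the project is run from the directory that holds docker-compose.yml without explicit -f flags (Compose only picks up docker-compose.override.yml automatically in that case); write_cli_override is a hypothetical helper name.

```shell
# Write an override that puts openclaw-cli into the gateway's network namespace.
# $1: directory that contains the project's docker-compose.yml (assumed layout)
write_cli_override() {
  cat > "$1/docker-compose.override.yml" <<'EOF'
services:
  openclaw-cli:
    network_mode: "service:openclaw-gateway"
EOF
}
```

After write_cli_override . the health check above can be retried. Note that Compose rejects network_mode on a service that also declares ports or networks, so this only works if the cli service has neither.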

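Pitfall 2: there is no openclaw in the gateway

At runtime, when OpenClaw receives an instruction from the user, it often invokes various CLI tools to carry it out.

For example, if we ask OpenClaw to create a scheduled job, it calls a command like openclaw cron. We then see this in the logs: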

openclaw command not found

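Checking inside the gateway container confirms that there really is no command-line tool named openclaw or openclaw-cli!

In fact, in the container deployment:

- the gateway and the CLI tool share one image

- the two services have different command/entrypoint settings

- but the image really does not contain an openclaw CLI tool, only openclaw-cli

This is essentially a bug in the containerized deployment, but given the other problems that might be lurking, I gave up on this approach.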
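The missing binary is easy to confirm from the host. A small sketch, assuming the Compose project is up and the service is named openclaw-gateway as above; service_has_command is a hypothetical helper, not an OpenClaw tool.

```shell
# Succeeds if a command is found on PATH inside a running Compose service.
# $1: Compose service name, $2: command to look for
service_has_command() {
  # -T disables TTY allocation so the check also works from scripts.
  docker compose exec -T "$1" sh -c 'command -v "$1" >/dev/null 2>&1' sh "$2"
}
```

For example, service_has_command openclaw-gateway openclaw || echo "openclaw is missing in the gateway" reports the situation described above.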

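Virtual machine deployment

Without containers, a virtual machine is the best fit, and my NAS already runs qemu anyway.

I had recently heard about Ubuntu's multipass, though, so this was a good chance to try it.

Installing and configuring multipass

multipass is only available as a snap, and I needed to keep its data directory off the rootfs. A few settings need special care, mainly that the directory it uses must be under a location such as /mnt or /media, because those are the paths the snap's removable-media interface grants access to. See "Configure where Multipass stores external data".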

# Install multipass

sudo snap install multipass

# Set the external data directory to /mnt/storage/multipass/

sudo snap stop multipass

sudo snap connect multipass:removable-media

mkdir -p /mnt/storage/multipass/

sudo chown root /mnt/storage/multipass/

# Set the environment variable for the systemd unit

sudo mkdir -p /etc/systemd/system/snap.multipass.multipassd.service.d/

sudo tee /etc/systemd/system/snap.multipass.multipassd.service.d/override.conf <<EOF

[Service]

Environment=MULTIPASS_STORAGE=/mnt/storage/multipass/

EOF

# Reload systemd

sudo systemctl daemon-reload

# Copy the existing data

sudo cp -r /var/snap/multipass/common/data/multipassd /mnt/storage/multipass/data

sudo cp -r /var/snap/multipass/common/cache/multipassd /mnt/storage/multipass/cache

# Start the multipass service

sudo snap start multipass

multipass now keeps its data under /mnt/storage/multipass/ instead of taking up space on the rootfs.
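To double-check that the drop-in was picked up, the unit's effective environment can be inspected. A minimal sketch; multipass_storage_is is a hypothetical helper name, and the unit name is the one used in the override path above.

```shell
# Succeeds if the multipassd unit carries MULTIPASS_STORAGE=<dir>.
# $1: expected storage directory
multipass_storage_is() {
  systemctl show snap.multipass.multipassd.service --property=Environment |
    grep -qF "MULTIPASS_STORAGE=$1"
}
```

For example, multipass_storage_is /mnt/storage/multipass/ && echo "storage override active".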

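Launching and configuring the multipass VM

The simplest way is a one-line launch:

multipass launch --name openclaw-vm

However, a VM launched this way gets the default CPU cores, memory and disk, which are not enough to install OpenClaw, so the disk and memory need to be adjusted afterwards. That is easy too: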

multipass stop openclaw-vm

multipass set local.openclaw-vm.cpus=2

multipass set local.openclaw-vm.disk=64G

multipass set local.openclaw-vm.memory=4G

multipass start openclaw-vm

This gives us a VM with 2 cores, 4 GB of memory and a 64 GB disk.
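For a fresh VM the same sizes can also be passed at launch time, which avoids the stop/set/start cycle. A sketch assuming a reasonably recent multipass (older releases spell the memory flag --mem); launch_vm is a hypothetical wrapper.

```shell
# Launch an instance with explicit resources in one step.
# $1: instance name, $2: CPU count, $3: memory size, $4: disk size
launch_vm() {
  multipass launch --name "$1" --cpus "$2" --memory "$3" --disk "$4"
}
```

For example, launch_vm openclaw-vm 2 4G 64G, followed by multipass info openclaw-vm to confirm the result.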

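Next I wanted to bring the configuration from the earlier Docker deployment into the VM. That turned out to be easy as well: a single command mounts it at /extra/openclaw inside the VM, ready to use.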

multipass mount /mnt/path/to/openclaw openclaw-vm:/extra/openclaw

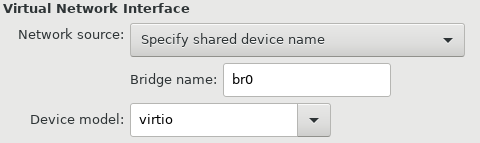

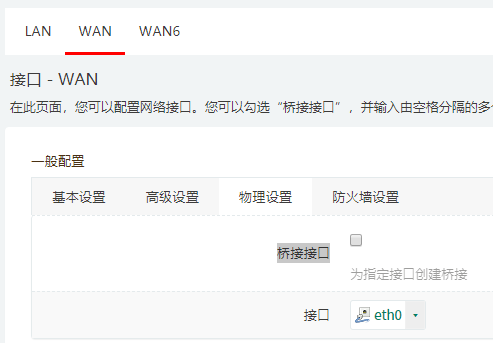

To make the VM easier to reach from the local network, add a bridge so that it gets a LAN IP:

multipass set local.openclaw-vm.bridged=true

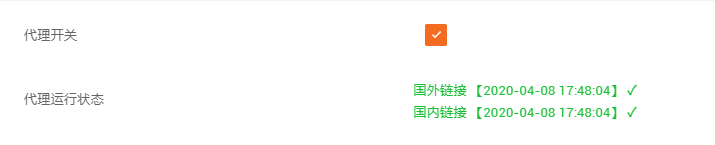
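The bridged setting depends on multipass knowing which host interface to bridge, and as far as I know the instance has to be stopped while it is changed. A hedged sketch of the full sequence; bridge_vm is a hypothetical helper, and the interface name is whatever multipass networks lists on your host.

```shell
# Bridge an existing instance onto a host network interface.
# $1: host interface name (as listed by `multipass networks`), $2: instance name
bridge_vm() {
  multipass set "local.bridged-network=$1" &&
    multipass stop "$2" &&
    multipass set "local.$2.bridged=true" &&
    multipass start "$2"
}
```

For example, bridge_vm eth0 openclaw-vm, where eth0 is only a placeholder.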

After additionally pointing the VM at a gateway and nameserver that route traffic through a proxy, OpenClaw is ready to play with.

# cat /etc/netplan/50-cloud-init.yaml

network:
  version: 2
  # some configuration omitted...
      routes:
        - to: default
          via: 192.168.1.xxx
          metric: 50
      nameservers:
        addresses: [192.168.1.xxx]
      dhcp4-overrides:
        use-dns: false
        use-routes: false
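After sudo netplan apply it is worth confirming that the custom route actually wins, since the VM may still have another default route from its original interface. A small sketch that picks the default route with the lowest metric; default_gateway is a hypothetical helper name.

```shell
# Print the gateway of the default route with the lowest metric.
# A route without an explicit metric counts as metric 0, as in the kernel.
default_gateway() {
  ip route show default | awk '
    {
      m = 0; gw = ""
      for (i = 1; i <= NF; i++) {
        if ($i == "via") gw = $(i + 1)
        if ($i == "metric") m = $(i + 1)
      }
      if (gw != "" && (best == "" || m + 0 < bestm)) { best = gw; bestm = m + 0 }
    }
    END { if (best != "") print best }'
}
```

The printed address should be the 192.168.1.xxx gateway configured above; resolvectl dns shows the nameservers in use.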

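Installing and configuring OpenClaw

Since the VM is a clean environment with proxied internet access already set up, installation and configuration are straightforward.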

# Make sure build-essential is installed

sudo apt update && sudo apt install -y build-essential

# Download and install openclaw

wget https://openclaw.ai/install.sh

bash install.sh

# Link ~/.openclaw to /extra/openclaw and set the required environment variable

# (if ~/.openclaw already exists as a directory, move it aside first, otherwise ln creates the link inside it)

systemctl --user stop openclaw-gateway.service

ln -s /extra/openclaw ~/.openclaw

EDITOR=vim systemctl --user edit openclaw-gateway.service --full

# Add Environment=OPENCLAW_GATEWAY_HOST=0.0.0.0

systemctl --user start openclaw-gateway.service

The service is now up and listening on all network interfaces inside the VM, so it can be used normally.
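Instead of editing the full unit, the same variable can be set in a drop-in, which keeps the change separate from the installed unit file. A minimal sketch, assuming the installer registered openclaw-gateway.service as a systemd user unit; write_gateway_dropin is a hypothetical helper name.

```shell
# Write a user-level drop-in that sets the gateway bind address.
write_gateway_dropin() {
  dir="${XDG_CONFIG_HOME:-$HOME/.config}/systemd/user/openclaw-gateway.service.d"
  mkdir -p "$dir"
  cat > "$dir/override.conf" <<'EOF'
[Service]
Environment=OPENCLAW_GATEWAY_HOST=0.0.0.0
EOF
}
```

After write_gateway_dropin, run systemctl --user daemon-reload and restart the service. ss -ltn | grep 18789 should then show the listener on 0.0.0.0 rather than 127.0.0.1, assuming the gateway uses the same port as in the Docker setup.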

You can check its status with these commands:

openclaw health

openclaw status

You can also test it directly in the local TUI:

openclaw tui

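Miscellaneous

- For the rest of openclaw.json there are plenty of guides online. The main thing to note is that models.providers.xxx only configures the provider; the model also has to be configured in agent.defaults.models before a custom model API can be used.

- Creating and using a Telegram bot is very convenient; I recommend it.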